Feb 28, 2023

Merkle Tree based Proof of Storage

Hyperspace, our FYP project uses merkle trees for proof of storage, this article explains the technical stuff behind the storage proof of Hyperspace . This article assumes you’ve basic understanding of merkle trees. You can learn about merkle trees from this article or from this Youtube video.

Merkle tree is popularly used in blocks hash computation from transaction hashes. Any party can check if a block includes a certain transaction by a merkle path and transaction data. The interesting part here is that size of merkle path is log2n where n is the number of leaves in the tree. If we’ve 1 million transactions we’ll have only log2(1,000,000) = 20 leaves! in the merkle path.

Merkle Path as Proof

This interesting property of merkle path size allows us to build an efficient proof system for data verifications. The proof is generated by storage node to satisfy the clients that their data is still safe, here’s Hyperspace strorage node example ( try changing “index” query parameter). The proof is verified by clients, if storage node fails to generate proof or the proof is not valid, a smart contract punishes the storage node by deducting fine from their balance and refunding the user 2x of the fee they paid as reimbursement (We’ll see how in another article). Here’s an example of generated proof by storage node.

{

"root": "b456c4314c52024d1de21b307e6b586427069b93f0eb6420e88611faf9c83c4a",

"segment_index": 20,

"segments_count": 683,

"proof": [

"703b2c5a6d19387c2acd39d3ddd51024c4b0eabbb6e44897ea78b9455b5feae2",

"d3d7fb65b54647f63121641d3a7ae58f81ad97f5d4dc5fe0c7ce34b5c902d796",

"893bcd87e89f329919787b5dd6c41fd79e42d6d477c54d414d79ecfb79efcd6f",

"8cde4cffc5207966a4659b6759b579c07c75b4223b51a189841d5bf97362c4a1",

"2f62a157c6b3e4f0bb3f1fd61cb036f9c09bf5de8e2b670bae34fd6601b7390d",

"5c2c08a14e62501ac59d5e1fa42e35000d22bd87b9f04d0ccd0ad36db6ddc4e1",

"5e4b4d72601bce80f02ae2d3146ca250dd33045f2a244ac9ddfd98bd7db607e2",

"c90b3bc273b89814dc2c066c87a3e512aeec0ab4d4ae08de88ca64445e29ef8a",

"506598e4a09198634a0041c2b10431aaa9096ef00b0b7e47cfa457e11ff5025b",

"8d1e33ade261ae3f59cd3a619c0efa5290f0dc9e277ce0034b5b918a39acd400",

"9bd45d86ad6e95e38d9bdc010c09e3800c75106359aa9feca457eaccee8d66f4"

],

"directions": [0, 1, 1, 0, 1, 0, 1, 1, 1, 1, 1],

"data_base64": "..."

}

The “proof” array represents merkle path along with “directions”. Let’s dig into the details.

Data segments as Merkle leaves

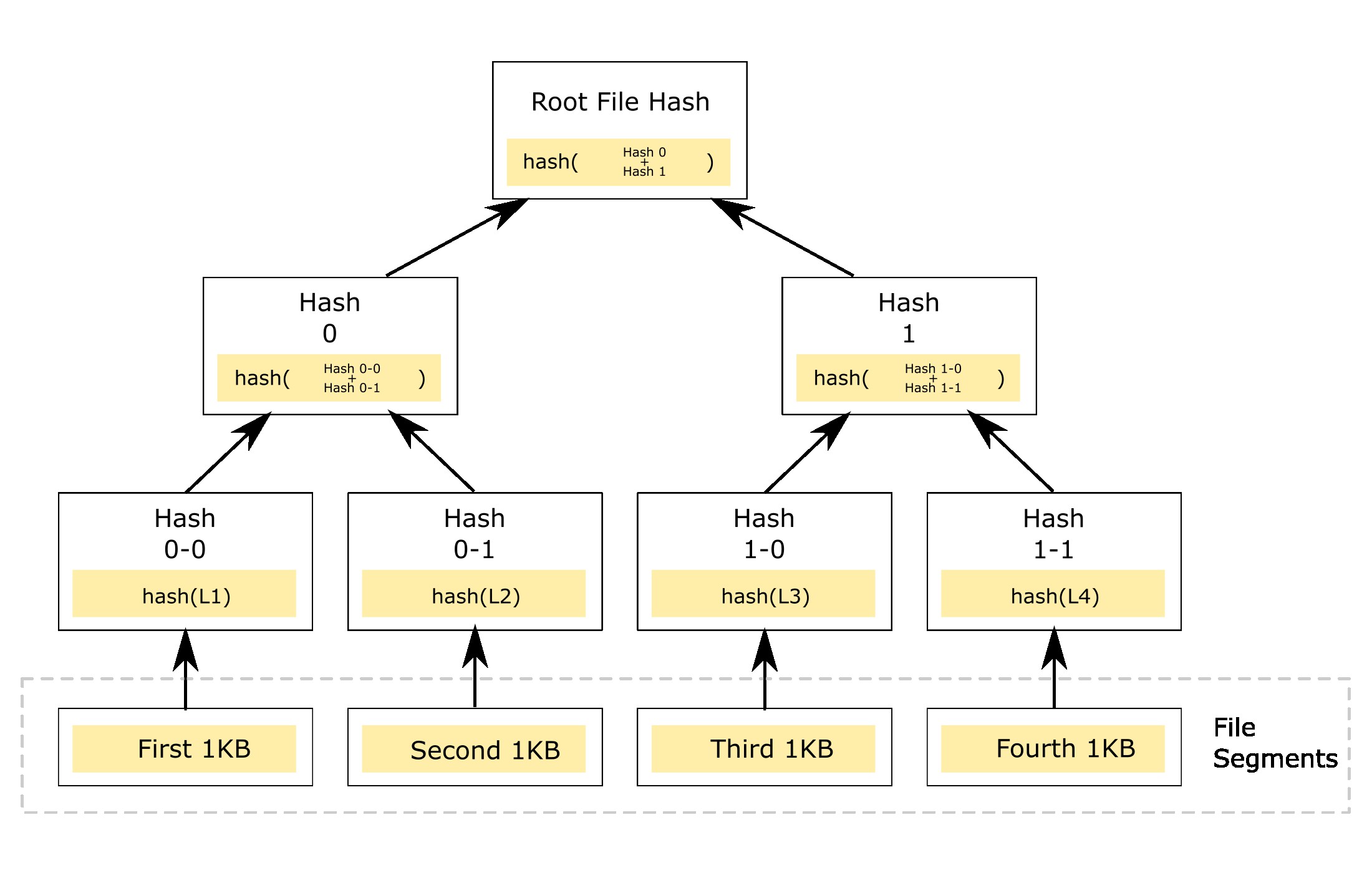

As merkle leaves are just the hashes of data, we can divide our file into multiple segments and compute their hashes to be used as merkle leaves. Suppose we’ve file with 4KB size. We can logically divide our file into 4 segments, each segment with 1KB of size. Each segment is then hashed to form a merkle leave, and then we form regular merkle tree as visualized below.

Note that this is logical division, when creating a leave we can use data offsets to feed the hash algorithm like the following code statement.

Note that this is logical division, when creating a leave we can use data offsets to feed the hash algorithm like the following code statement.

// We're not moving any data, just referencing a slice

leaf = keccak256(fileData[0:1024]) // hash of first segment

The client/customer facing app, computes the merkle root first and then uploads the to storage node along with merkle root. The storage node computes the merkle root on its side, and passes this merkle root along with other data to the contract. The client also passes the same root and some other parameters to the contract along with hosting fee. As we can see in the following snippet.

function concludeTransaction(

CallerType callerType,

address userAddress,

bytes32 merkleRootHash,

uint32 fileSize,

uint256 timerStart,

uint256 timerEnd,

uint64 proveTimeoutLength,

uint64 concludeTimeoutLength,

uint32 segmentsCount,

uint256 bidAmount

) public payable {...} // Snippet from Hyperspace smart contract

The concludeTransaction must be invoked by both client and node and their file parameters must match. If the roots mismatch i.e the user submitted wrong root or the storage node passes wrong root, this function reverts and no fee deduction is made.

Requesting and Generating Proof

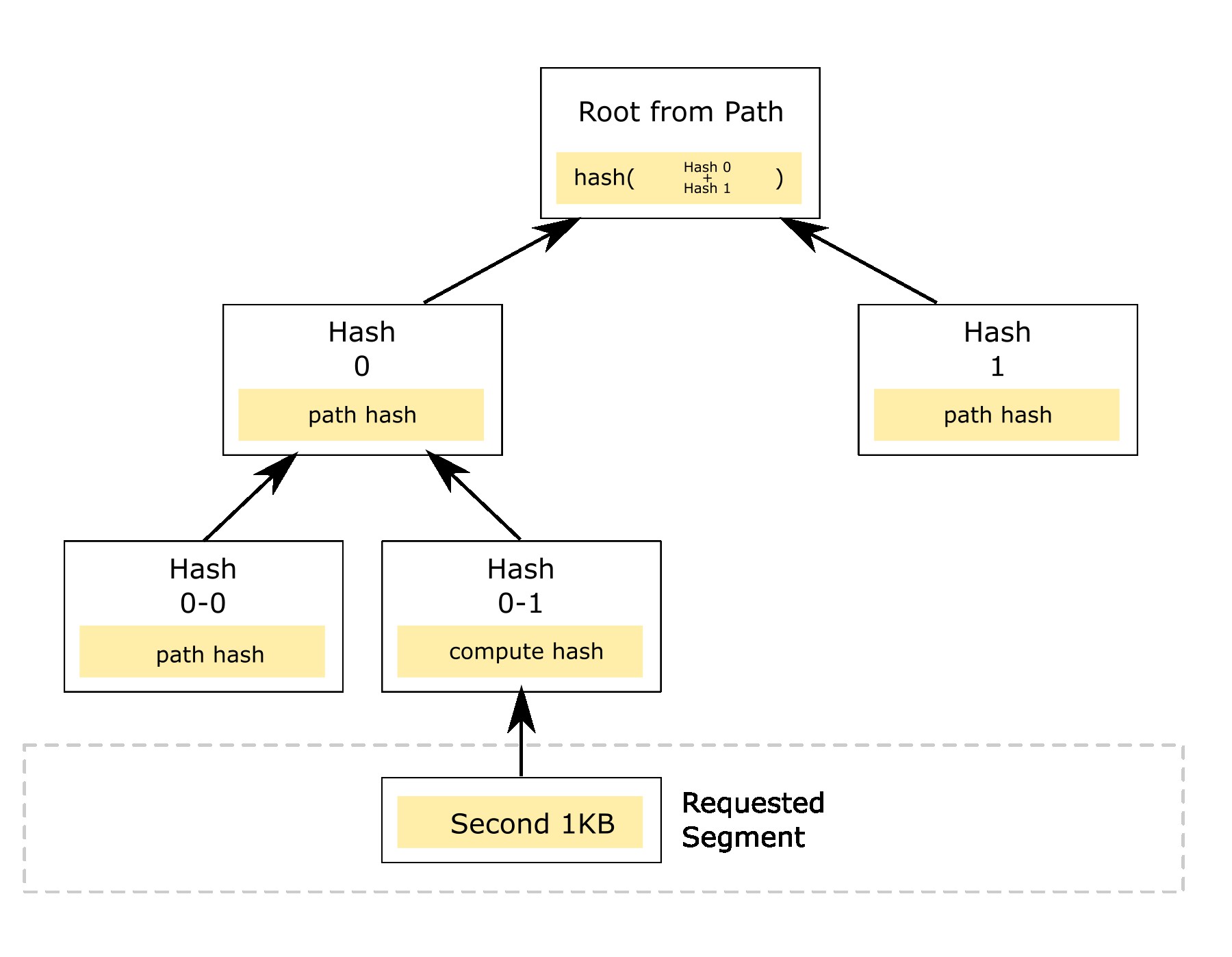

In case of uncertainty about our file integrity. We can request storage node to generate a storage proof with given segment index along with that that segment data. Suppose we request a proof with second segment (i.e segment with index 1). The storage node generates a merkle path and responds with merkle path and second segment data which in our case is 1KB in size.

We compute the hash of the second segment data, and replace this hash in the merkle path, we then verify if the generated root is equal to the one provided by storage node. If they match, it means that our file is still valid and safe.

Randomisation of Segment Index

The segment index we request must be random, if we program our client software to only request first or last segment to verify file integrity, the faulty storage node may only store that 1KB segment data and merkle path to it without storing the actual file, thus passing the proof of storage without actually storing the file.

Invalid Proof and smart contract

In case the storage node fails to provide the correct proof i.e the path it means the storage node has lost the data. In this case we invoke smart contract method validateStorage asking for proof validity. Following is concise snippet from contract.

function validateStorage(

address userAddress,

bytes32 fileRootHash,

uint32 segmentIndex

) public {

bytes32 ref = computeKey(userAddress, fileRootHash);

Transaction storage t = transactionMapping[ref];

...

emit EvProveStorage(

userAddress,

fileRootHash,

(block.timestamp),

(block.timestamp + uint256(t.proveTimeoutLength)),

segmentIndex

);

}

The smart contract generates an event EvProveStorage to which storage node must respond by submitting correct proof to smart contract through processValidation method invocation in limited time (proveTimeoutLength). Since the segment size is only 1KB and merkle path size is also small, the smart contract can verify the proof on-chain. Here’s concise snippet.

function processValidation(

address userAddress,

bytes32 rootHash,

bytes calldata data,

bytes32[] calldata proof

) public returns (bool) {

bytes32 ref = computeKey(userAddress, rootHash);

Transaction storage t = transactionMapping[ref];

require(

block.timestamp < (t.validationRequestTime + t.proveTimeoutLength),

"validation window expired"

);

uint32 segmentInd = t.validationSegmentInd;

bytes32 leafHash = keccak256(data);

uint8[] memory array = new uint8[](1);

bool isValid = verify(proof, rootHash, leafHash, segmentInd, array);

require(isValid == true, "invalid proof");

emit EvValidationSubmitted(

userAddress,

rootHash,

t.validationSegmentInd,

block.timestamp

);

}

Insurance and Reimbursements

If the time limit is expired for proof submission or the submitted proof is not valid. The client can call validationExpired method of smart contract, the smart contracts transfers 2x of the the fee to the client as an insurance reimbursement for the loss of data. The second half of the reimbursement is balance, which storage node is required transfer to contract as collateral during file upload. Thus losing the collateral in case it loses the file. The working of validationExpired is shown below.

function validationExpired(address userAddress, bytes32 rootHash) public {

bytes32 ref = computeKey(userAddress, rootHash);

Transaction storage t = transactionMapping[ref];

...

require(

block.timestamp > (t.validationRequestTime + t.proveTimeoutLength),

"validation window not expired"

);

uint256 transferAmount = t.bidAmount * 2; //twice

lockedCollateral -= transferAmount;

payable(userAddress).transfer(transferAmount);

emit EvValidationExpired(userAddress, rootHash, block.timestamp);

...

}

Conclusion

The proof of storage based on merkle trees and using smart contract as arbitrator is perfect combination. This proof is simple and efficient, not only client, any 3rd party can request a proof from storage node, and since the data on storage node is end-to-end encrypted by default, 3rd parties can only verify the proof but can’t access data. This concludes our article about the proof of storage system we’ve build in Hyperspace. I’ll publish a detailed article on Hyperspace itself soon but it’s technical specs can’t be explained in single article so articles like this explain this sort of stuff.